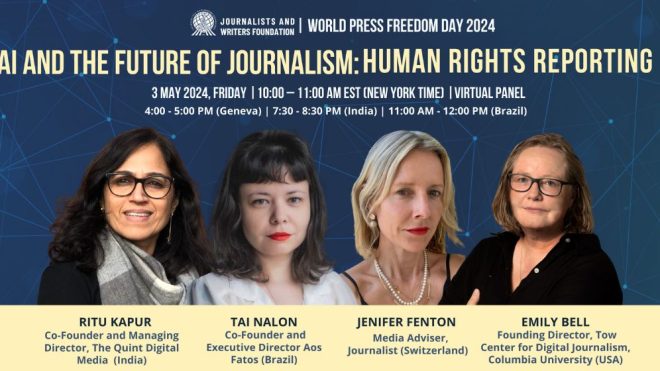

WORLD PRESS FREEDOM DAY 2024 | VIRTUAL EVENT

AI AND THE FUTURE OF JOURNALISM: HUMAN RIGHTS REPORTING

3 May 2024, Friday | 10:00 – 11:00 AM EST

On the occasion of World Press Freedom Day 2024, the Journalists and Writers Foundation (JWF) organized the virtual event “AI and the Future of Journalism: Human Rights Reporting.” The event aimed to raise awareness of the implications of artificial intelligence for the right to access information, and to give media professionals a platform to share their current experiences of working alongside AI as it transforms the standards and ethics of journalism.

In her opening remarks, Cemre Ulker, the Representative of the JWF to the UN Department of Global Communications, highlighted the intersection of artificial intelligence, journalism, and human rights reporting. As an advocate for human rights closely aligned with the UN’s priorities, Ms. Ulker emphasized the critical nature of this topic, especially in light of the UN’s upcoming Summit of the Future in September. She underscored the dual nature of AI as both advantageous and challenging, particularly in its implications for peace, security, and the spread of misinformation. Ms. Ulker stressed the vital role of journalism and access to credible information sources in upholding human rights, especially during democratic elections.

Following Cemre Ulker’s opening remarks, the moderator of the session, Jennifer Fenton, underscored the significance of World Press Freedom Day this year, particularly amid ongoing conflicts like the Israel-Gaza War, which has tragically claimed the lives of numerous journalists. Ms. Fenton stressed the urgent need to safeguard press freedom, especially at a time when increasing global conflicts and political tensions pose significant threats to journalists worldwide. She highlighted how the outcomes of elections across the globe could shape the future landscape of journalism and media freedom.

Turning to Emily Bell, the Founding Director of the Tow Center for Digital Journalism at Columbia University, Jennifer Fenton asked how AI is transforming the journalism landscape. Ms. Bell addressed two main aspects: external forces, and internal challenges and opportunities. She highlighted economic viability as a key concern, noting how AI could undermine journalism’s sustainability and so jeopardize its role in upholding human rights and reporting abuses. Emily Bell also addressed the proliferation of disinformation facilitated by AI, which poses a threat to journalism’s integrity. She raised concerns about surveillance and data sources, cautioning that the concentration of power in tech giants exacerbates inequalities in the global media landscape.

Despite these challenges, Emily Bell acknowledged AI’s potential to enhance human rights reporting through remote sensing and data gathering. However, she stressed that ethical considerations must be prioritized in AI tool development. Jennifer Fenton echoed Ms. Bell’s concerns, noting how AI’s benefits are often skewed towards regions in the global North, exacerbating disparities in the media industry.

The Tow Center’s Founding Director also highlighted concerns raised by researchers at Google several years ago. She expressed worry about potential inequalities embedded into AI tools during development, making them susceptible to misuse. Emily Bell pointed to the persistent issue of biases in data sets and to the subsequent dismissal of the researchers, which illustrates how difficult it is to address such issues within big tech companies. She stressed the importance of newsroom expertise in understanding how AI tools operate and where they are vulnerable, and mentioned conducting “fail tests” on AI tools with students to assess their effectiveness.

Despite these challenges, Ms. Bell expressed hope in the ongoing conversations about AI ethics in journalism. However, she was concerned about technology companies’ rapid integration into newsrooms through content and tools deals that primarily benefit large publishers. She also highlighted the importance of ethical guidelines in addressing issues like data sourcing in large language models and the need for industry-wide awareness and action to ensure responsible and inclusive AI technology development. Emily Bell emphasized the importance of holding tech companies accountable, advocating for grassroots resilience-building efforts and collaborative regulation at the local level. She reiterated the need for a multi-faceted, society-wide approach to AI regulation, combining external accountability mechanisms, regulatory intervention, and grassroots efforts to address ethical and legal challenges.

Tai Nalon, Co-Founder and Executive Director of Aos Fatos based in Brazil, began her reflections by acknowledging AI’s long-standing integration in journalism, particularly in streamlining internal newsroom processes. She highlighted how AI tools are already automating tasks like content generation, summarization, and data analysis across various topics such as weather reports, stock market updates, and monetary policy decisions. Tai Nalon noted the increasing accessibility of AI, especially natural language generation (NLG), empowering journalists to utilize it in their work. However, she stressed the importance of journalists adapting and becoming proficient in AI operations, as it will inevitably shape newsrooms’ future. Ms. Nalon discussed AI’s potential applications, from optimizing headlines for search engine rankings to suggesting topics based on social media trends and simplifying large datasets for clearer presentation.

She emphasized the necessity for creativity and pragmatism in integrating AI tools into newsroom workflows without burdening journalists. Tai Nalon highlighted the possibility of text formats evolving into more conversational styles, influenced by the rising popularity of chatbots as a means of communication. She predicted a shift towards more interactive and conversational journalism formats in the coming months and years, aligning with modern audience preferences. She stressed the importance of journalists, managers, and editors creatively leveraging AI technologies while being mindful of potential challenges and disruptions to the journalism industry.

In response to Jennifer Fenton’s question about leadership in regulating digital platforms and the necessity for cooperation across the field, Tai Nalon shared country-based insights from Brazil’s ongoing regulatory discussions. Over the past four years, there has been growing recognition of the need to regulate digital platforms, particularly following the January 8 attacks in Brazil last year.

Ms. Nalon also emphasized regulation’s critical role in ensuring transparency from digital platforms, highlighting the increasing opacity of platforms like Twitter and YouTube. These platforms have restricted access to their APIs, hindering the data access that is crucial for understanding content distribution and combating misinformation. She stressed the importance of holding platforms accountable in order to safeguard the rights of users, publishers, and social participants within regulatory frameworks that balance freedom of speech with accountability. She also highlighted the complexity of regulatory discussions in Brazil, which are shaped by historical legacies and external influences, and reiterated that regulation is imperative to protect user rights in an increasingly complex online landscape.

Ritu Kapur, the Co-Founder and Managing Director of the Quint Digital Media based in India, provided insights from the perspective of an Indian newsroom on the risks and opportunities associated with AI in relation to human rights. Ritu Kapur acknowledged the complexity of assessing AI’s impact, describing it as a multifaceted issue operating on various levels simultaneously. She expressed concern about the economic implications for newsrooms, particularly those already struggling to survive, and highlighted the potential for AI to deepen control by big tech companies, reminiscent of the influence search algorithms had when news content was generated around trending keywords.

Ritu Kapur cautioned against the proliferation of low-quality content generated at speed, contributing to information overload and clutter in the media landscape. She emphasized addressing equity gaps in AI literacy within newsrooms, especially concerning the detection and combating of disinformation driven by AI tools. Ms. Kapur underscored the need for journalists to continuously upskill and understand AI’s role in news content generation to effectively counter misinformation.

She pointed out the potential disparity between large legacy newsrooms and small digital-only outlets in accessing and developing AI technologies tailored for newsroom use. The Quint Digital Media’s Co-Founder also stressed the importance of engaging with AI technology while being mindful of its potential consequences, advocating for a nuanced approach that balances adoption with critical awareness. She highlighted the necessity for newsrooms to adapt and integrate AI tools while addressing equity gaps and ensuring that ethical journalistic practices are upheld as the technological landscape evolves.

Following the moderator’s question to Ritu Kapur regarding AI’s potential in enhancing media coverage, fact-checking, and promoting democratic participation, Ms. Kapur reflected on her experiences, particularly in the context of Indian elections. She noted some positive applications of AI in the campaigning process, such as real-time translation of speeches into multiple languages to reach voters across diverse linguistic and regional backgrounds, aiming to provide voters with more access to correct information and address hyperlocal issues effectively. However, Ritu Kapur also expressed concerns about the misuse of AI in generating propaganda content, especially by exploiting the vulnerabilities of social media platforms. She highlighted the risk of AI being used to disseminate disinformation at a rapid pace, particularly narratives targeting minorities. Despite the current limitations of AI accessibility and effectiveness in Indian elections, Ms. Kapur expressed apprehension about the potential escalation of hate-driven agendas by the government post-elections.

As the Q&A session with the audience commenced, Jennifer Fenton relayed a question from a participant from Bangladesh to Ritu Kapur on the challenge of distinguishing between AI-generated and human-generated content, and in particular how difficult it may be for consumers, readers, or viewers to tell the two apart. Ms. Kapur emphasized the critical importance of media literacy in combating disinformation, especially in the era of AI-driven content. She stressed the need for engaging and accessible media literacy campaigns, noting that while training individuals to detect deepfakes may have limitations, concerted efforts involving collaboration between big tech, corporations, and possibly governments could amplify the reach and impact of media literacy initiatives. Ritu Kapur also touched upon the delicate balance between regulating AI and preserving press freedom, suggesting that broader regulatory approaches encompassing competition laws, data privacy regulations, and copyright laws could complement efforts specifically targeting AI.

Later, Jennifer Fenton directed a question from the audience to Tai Nalon, inquiring about immediate threats to journalists. Ms. Nalon highlighted the concerning trend of virtual mobbing and online harassment facilitated by AI-driven technologies, which poses significant risks to female journalists in particular. She emphasized the ease with which fake profiles can proliferate on platforms and the limited action taken by some platforms to address this issue. Tai Nalon of Aos Fatos cautioned against the potential for generative AI to fabricate identities and attribute false actions to journalists, underscoring the urgent need for platforms to prioritize addressing such threats and ensuring the safety of journalists in the digital space.

In response to a question from a participant in South Africa regarding the intersection of AI with gender and marginalized groups, Emily Bell emphasized that the issue extends beyond AI, reflecting broader power dynamics. She highlighted the disproportionate targeting of women, particularly non-white women, in instances of harassment and defamation, underscoring the systemic underrepresentation of these groups in positions of power globally. Ms. Bell noted the lack of diversity in leadership roles within top AI companies, illustrating how power imbalances influence the design and use of AI technologies. She cited examples such as biased facial recognition technology in the criminal justice system, which struggles to accurately identify black individuals. Emily Bell stressed the importance of media literacy and journalistic vigilance in scrutinizing these systems and holding power to account. She urged journalism to embrace innovation as an opportunity for reform, advocating for inclusive reporting that amplifies the voices of marginalized communities and scrutinizes power structures at all levels. Ms. Bell cautioned against uncritical acceptance of innovation, emphasizing the need to ensure that progress in journalism aligns with principles of accountability and representation for all.